The Hive: A Human and Robot Collaborative Building Process

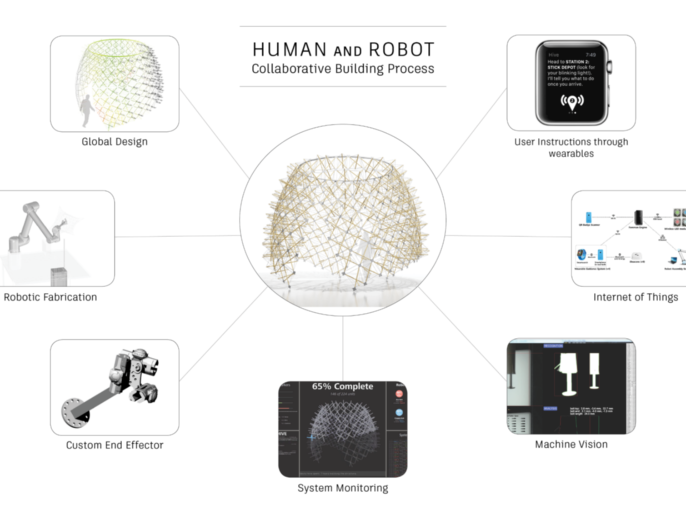

The Hive Pavilion, exhibited at Autodesk University (2015), investigated whether untrained workers and industrial robots could collaborate to fabricate and assemble an architectural-scale structure using computational design, wearables, and interconnected devices. Though many narratives promote the idea that robots will displace humans in the workforce, Hive set out to challenge that assumption and propose an alternative view of human and robot collaboration, in which the dexterity and cognitive abilities of humans augment the precision and repeatability of robots to enable previously impossible tasks.

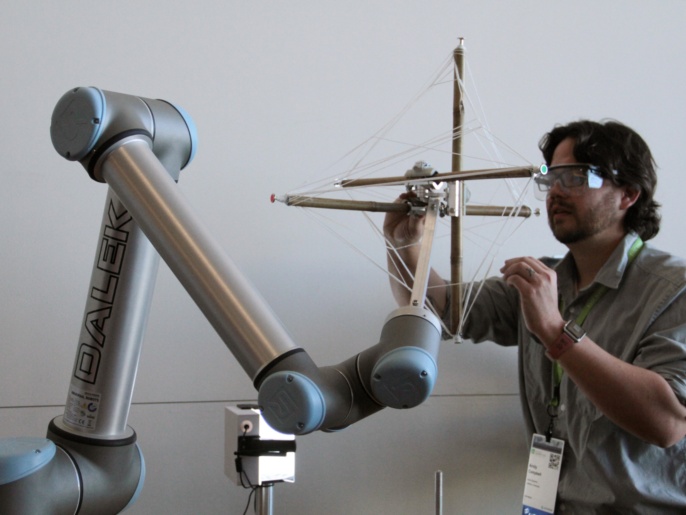

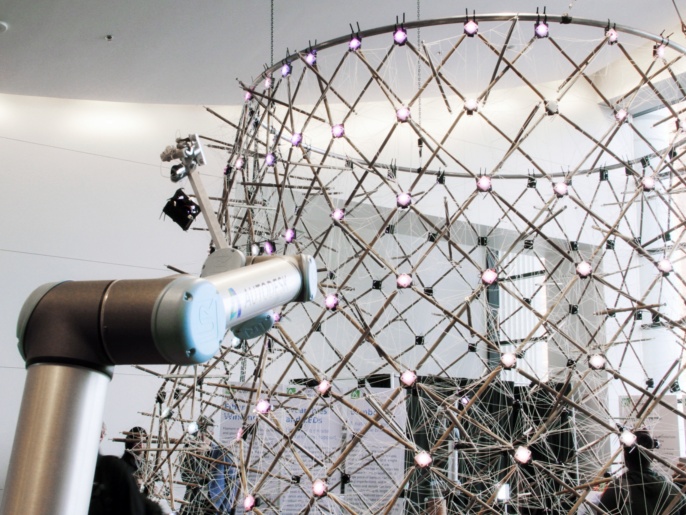

Hive was developed as a multi-disciplinary collaboration between several teams: the Applied Research Lab of the Office of the CTO of Autodesk; The Living, an Autodesk Studio; the Autodesk User Interface Research team; and the Institute for Computational Design (ICD) at the University of Stuttgart. These teams developed a collaborative building process and fabrication system as well as a custom app for the exhibit. The structure itself was built over the course of three days by exhibit attendees working together with collaborative UR10 robots from Universal Robots at the Venetian Conference Centre in Las Vegas. Significantly, these attendees had no prior knowledge of the system and had never previously worked with a robot.

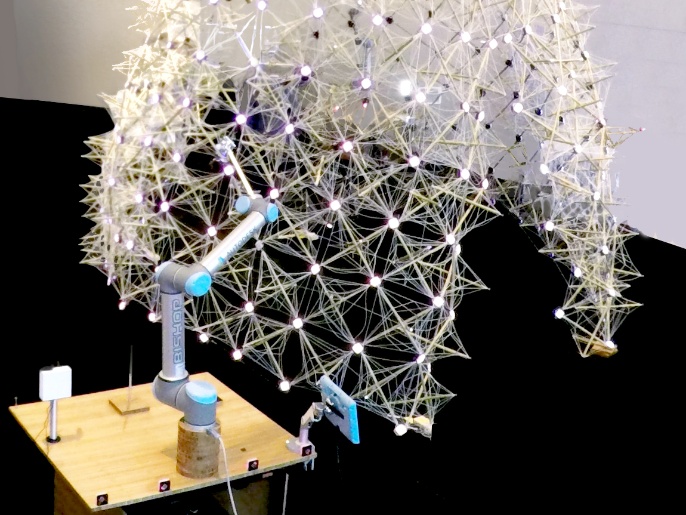

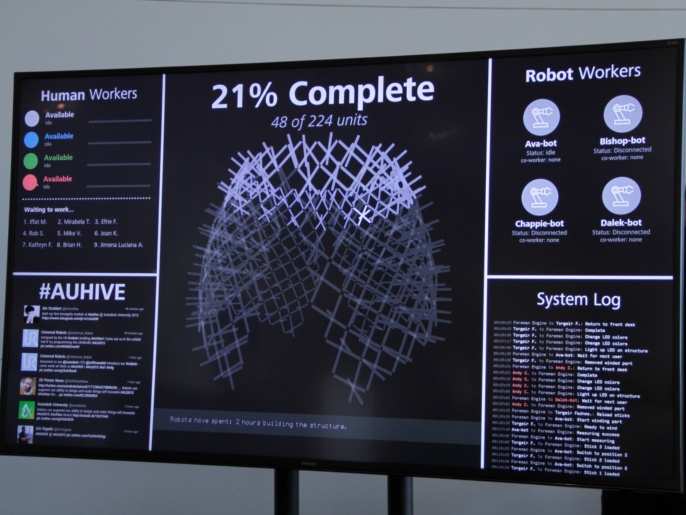

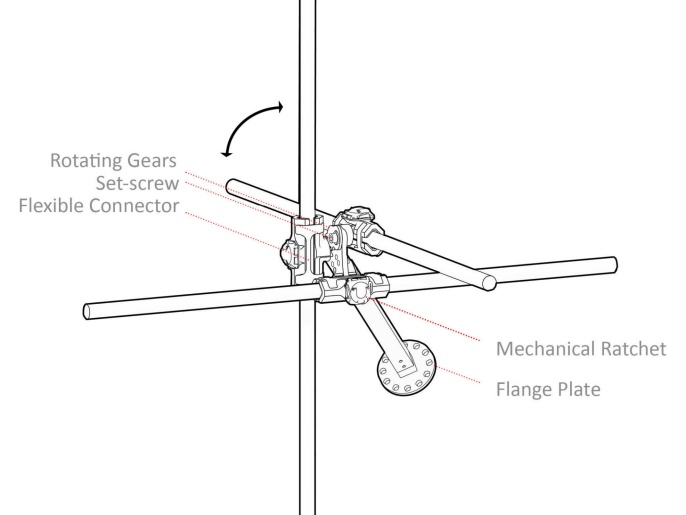

At the exhibition, an interactive fabrication and assembly process facilitated the production of each of the 224 unique tensegrity modules. These modules, when aggregated, form a pre-designed doubly-curved surface. The term “tensegrity” was originally coined by Buckminster Fuller, referring to a minimal structural system whereby rigid compression elements, in this case bamboo, can be held in place without touching one another by secondary tension elements. Bamboo was selected as the base material for the compression structure because it is ubiquitous, strong, and lightweight, although its natural variation makes it ill-suited to traditional automation. Here, however, the unique geometry of each module in the global design ensured that the structure would take the desired final form without any human oversight.

After referencing a database with the geometric information for each module, the robot was programmed to move to an exact position in space for each bamboo element, allowing the human to fasten the bamboo pieces within a custom-designed, CNC-milled end effector. This process drew on the robot’s precision as well as the human’s dexterity in tightening a mechanical ratchet around the bamboo’s uneven circumference. The robot would then execute a custom scanning routine using image analysis to recalculate the exact position of each bamboo tip, allowing the robotic motion paths to be dynamically recalculated and uploaded in response to tolerances from material variation and human error. A networked fiber layout of waxed thread was then wound around the tips of the bamboo by the robot, providing the necessary tension to hold the bamboo in place. A tension control mechanism, inspired by dancing bar tension mechanisms in industrial extrusion or rolling applications, maintained an approximately constant tension on the fiber during the winding process. This fabrication technique is derived from previous research at the ICD and the ITKE at the University of Stuttgart, which investigates how filament materials such as carbon and glass fiber can be wound around minimal formwork to achieve highly differentiated and structurally performative structures.
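The path recalculation step can be illustrated with a short Python sketch. It is not the project's actual routine: it assumes each winding waypoint is stored as an offset from the bamboo tip it wraps around, so that once the scan reports the measured tip positions the path can be rebuilt from them. The names `Waypoint` and `recalculate_path`, the millimetre units, and the `max_deviation_mm` threshold are all hypothetical.

```python
from dataclasses import dataclass
from math import dist

Point = tuple[float, float, float]


@dataclass(frozen=True)
class Waypoint:
    """One winding target, stored relative to a bamboo tip (hypothetical format)."""
    tip_index: int   # index of the bamboo tip this waypoint wraps around
    offset: Point    # position relative to that tip, in mm


def recalculate_path(
    nominal_tips: list[Point],
    measured_tips: list[Point],
    waypoints: list[Waypoint],
    max_deviation_mm: float = 30.0,  # assumed tolerance, not from the source
) -> list[Point]:
    """Rebuild absolute winding targets from scanned tip positions."""
    if len(nominal_tips) != len(measured_tips):
        raise ValueError("scan must report one position per bamboo tip")

    # Refuse to wind if a tip has drifted further than the assumed tolerance;
    # in that case the element would need to be re-clamped by the human.
    for i, (nominal, measured) in enumerate(zip(nominal_tips, measured_tips)):
        if dist(nominal, measured) > max_deviation_mm:
            raise ValueError(f"tip {i} deviates too far from its nominal position")

    # Each target is the measured tip position plus the stored offset.
    return [
        tuple(t + o for t, o in zip(measured_tips[w.tip_index], w.offset))
        for w in waypoints
    ]


if __name__ == "__main__":
    nominal = [(0.0, 0.0, 0.0), (100.0, 0.0, 0.0)]
    measured = [(2.0, -1.0, 0.0), (100.0, 3.0, 0.0)]
    path = [Waypoint(0, (0.0, 0.0, 10.0)), Waypoint(1, (0.0, 0.0, 10.0))]
    print(recalculate_path(nominal, measured, path))
```

Storing waypoints relative to the tips keeps the correction trivial: the designed winding pattern never changes, only the reference points it hangs from.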

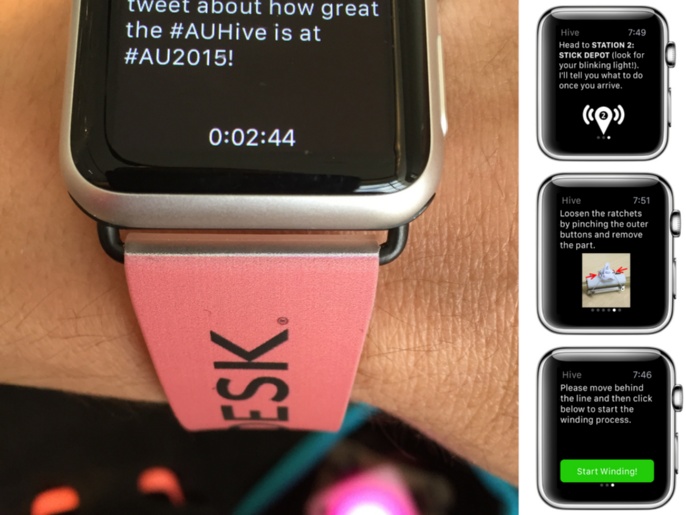

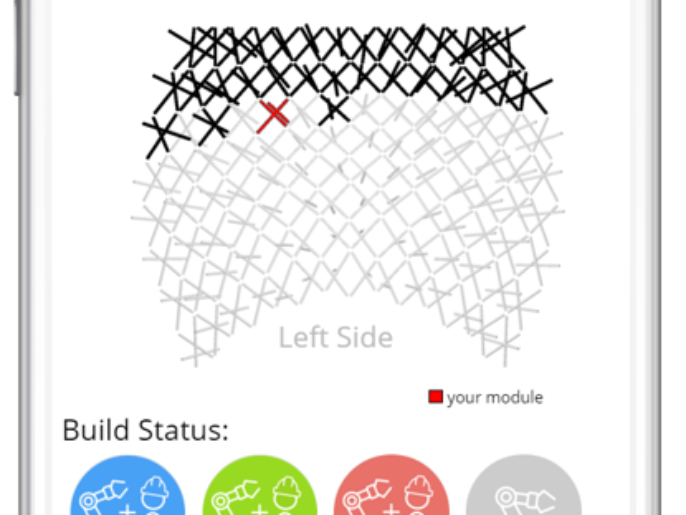

As a live building process, the exhibition demonstrated the potential of computation and wirelessly connected devices in coordinating multiple users and activities. The overall assembly was monitored by an intelligent computational engine, the “foreman engine”, which tracked the build status of the assembly and the available workers and robots. The build space was equipped with iBeacon location sensors that helped determine the location of each user in the space. Just-in-time directions for each user were delivered on an Apple Watch: by advancing through the app, the user would navigate the construction space, locate building materials, and respond to queries that verified that processes were complete and safety protocols were met. Addressable LEDs embedded within the completed parts would additionally light up in specific colors to signal to the user where to place the module on the growing assembly, demonstrating the potential of embedded intelligence in building materials.
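The bookkeeping attributed to the foreman engine (build status plus available workers and robots) can be sketched as a small Python class. This is an illustrative assumption rather than the exhibit's software: the `Foreman` class, its method names, and the first-come, first-served pairing of workers and robots are all hypothetical.

```python
from collections import deque
from enum import Enum


class Status(Enum):
    PENDING = "pending"
    IN_PROGRESS = "in_progress"
    COMPLETE = "complete"


class Foreman:
    """Tracks module build status and idle workers and robots (hypothetical sketch)."""

    def __init__(self, module_ids):
        self.status = {m: Status.PENDING for m in module_ids}
        self.idle_workers = deque()
        self.idle_robots = deque()
        self.active = {}  # module id -> (worker, robot) currently building it

    def check_in(self, worker):
        """Register a worker who is ready for a task."""
        self.idle_workers.append(worker)

    def add_robot(self, robot):
        """Register a robot that is free to build."""
        self.idle_robots.append(robot)

    def next_assignment(self):
        """Pair the next pending module with an idle worker and robot, if possible."""
        pending = next(
            (m for m, s in self.status.items() if s is Status.PENDING), None
        )
        if pending is None or not self.idle_workers or not self.idle_robots:
            return None
        worker = self.idle_workers.popleft()
        robot = self.idle_robots.popleft()
        self.status[pending] = Status.IN_PROGRESS
        self.active[pending] = (worker, robot)
        return pending, worker, robot

    def confirm_complete(self, module_id):
        """Mark a module done (e.g. after the user confirms) and free its team."""
        worker, robot = self.active.pop(module_id)
        self.status[module_id] = Status.COMPLETE
        self.idle_workers.append(worker)
        self.idle_robots.append(robot)

    def progress(self):
        """Return (completed modules, total modules)."""
        done = sum(s is Status.COMPLETE for s in self.status.values())
        return done, len(self.status)


if __name__ == "__main__":
    foreman = Foreman(range(1, 225))  # 224 modules, as in the pavilion
    foreman.check_in("attendee-1")
    foreman.add_robot("UR10-A")
    print(foreman.next_assignment())
    foreman.confirm_complete(1)
    print(foreman.progress())
```

In a setup like the one described, the confirmation step would correspond to the user answering a query on the watch app, and each assignment would be pushed to that user as a just-in-time direction.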

Though six-axis robots in industrial settings are generally programmed to execute identical tasks repeatedly, Hive demonstrated that six-axis arms can be deployed in a more dynamic assembly line in which humans and robots work safely side by side, producing unique rather than standardized elements. Technologies in sensing and wearables provide an incentive to reconsider existing safety protocols in human and robot collaboration, as well as the fundamental separation of human and robot tasks in the execution of complex processes.

PROJECT TEAM

Autodesk Applied Research Lab

Concept Development, Project Management

Maurice Conti, Heather Kerrick, David Thomasson, Evan Atherton, Nicholas Cote, Lucas Prokopiak, Arthur Harsuvanakit

Autodesk Research, User Interface Research & Research Transfer Groups

Interaction Development

Tovi Grossman, George Fitzmaurice, Justin Matejka, Fraser Anderson, Ben Lafreniere, Steven Li, Nicholas Beirne, Madeline Gannon, Thomas White, Andy Nogueira

The Living - An Autodesk Studio

Design System Development

David Benjamin, Danil Nagy, James Stoddart, Ray Wang, Dale Zhao

Institute for Computational Design and Construction, University of Stuttgart (Prof. Achim Menges)

Material System & Robotic Fabrication Development

Lauren Vasey, Long Nguyen, Thu Phuoc Nguyen, Tobias Schwinn

Marcelo Coelho Studio

LED System Development

Marcelo Coelho

Funding

Autodesk

UR10 Collaborative Robots provided by Universal Robots

Acknowledgements

Thanks to the students and visiting researchers who prototyped the project in the ITECH Robotics course, including Thu Nguyen Phuoc, Riccardo Manitta, Andres Obregon, Dongil Kim, Seo Joo Lee, Michael Sveiven, and Jenny Shen. Thanks also to the Digital Design Unit at TU Darmstadt, directed by Prof. Dr.-Ing. Oliver Tessmann, for the generous use of their facilities for prototyping.